How to Track Your Brand Mentions in ChatGPT, Claude & Perplexity (Step-by-Step)

Every week, millions of people open ChatGPT, Claude, or Perplexity and ask questions like "What's the best CRM for small teams?" or "Which project management tools do agencies use?"

If your brand gets mentioned in those answers, you're winning new visibility you never had to pay for. If it doesn't — that traffic is going to someone else, and you'd have no idea.

That's the quiet problem with AI search. Unlike Google, where you can check your rankings in seconds, AI engines don't come with a rank tracker. Responses change based on the prompt, the platform, the phrasing, even the day. Your brand could be appearing in hundreds of conversations — or getting completely ignored — and you wouldn't know either way.

This guide walks you through exactly how to track your brand mentions across the major AI engines, what to look for when you do, and how to turn that data into action.

Why AI Search Mentions Are Different From SEO Rankings

Before we get into the how, it helps to understand why this is a distinct problem from traditional SEO.

When you check your Google rankings, you're looking at a static list. Keyword X → Position 4. Reproducible. Consistent.

AI search doesn't work like that. When someone asks ChatGPT "what's a good email marketing tool for e-commerce?", the engine synthesizes an answer from its training data and any sources it retrieves in real time. Different phrasings produce different results. ChatGPT might recommend you, Perplexity might not, and Claude might mention a competitor instead — all for questions that describe the same buyer intent.

That's why tracking AI visibility requires its own toolset. You're not tracking positions. You're tracking how often, in what context, and with what language an AI mentions your brand across a wide range of relevant prompts.

Step 1: Identify the Prompts That Matter

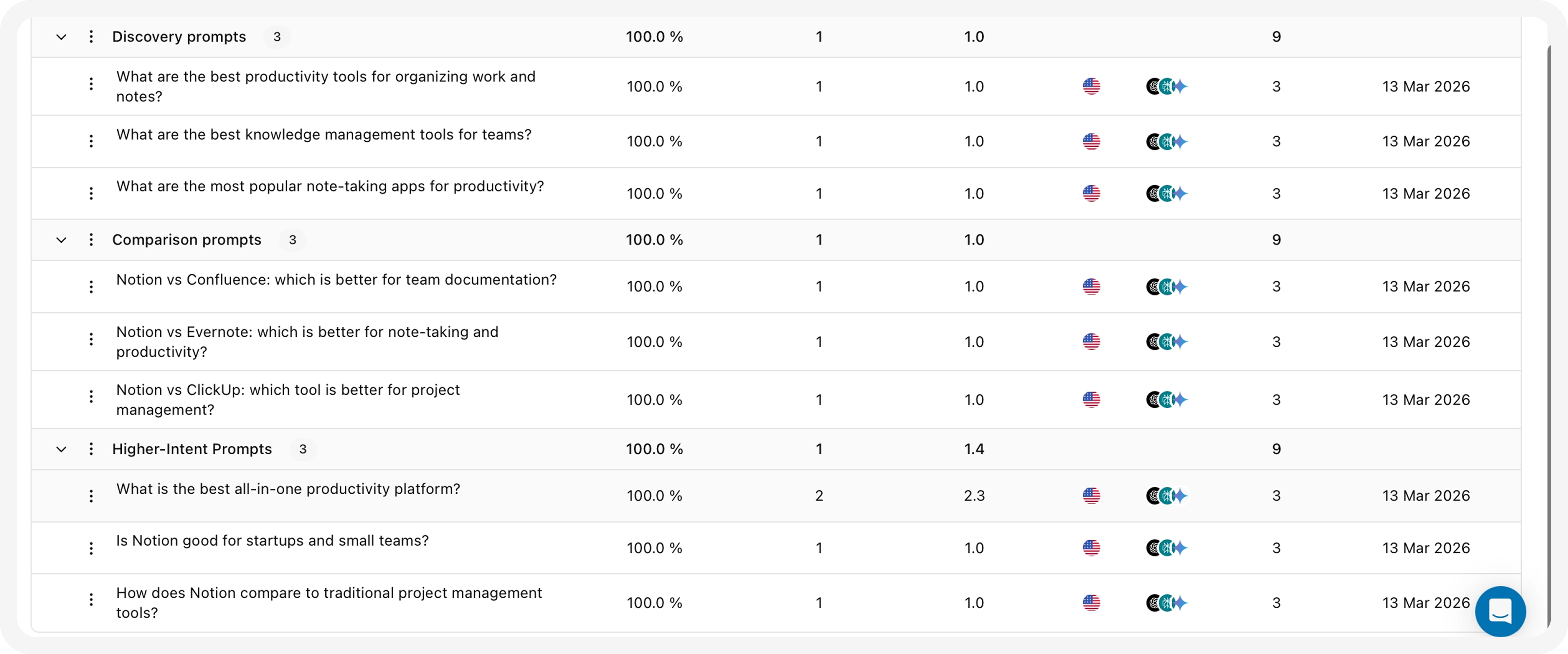

The first thing you need is a prompt library — a set of questions that your target customers are likely to ask AI engines when they're researching your category.

These aren't keywords. They're conversational questions. Think like a buyer, not a search engine:

- "What tools do SEO agencies use to track rankings?"

- "Best AI search analytics software for SaaS companies"

- "How do I know if my brand is showing up in ChatGPT?"

- "Compare [competitor name] vs alternatives"

Start with 20–30 prompts that reflect the different stages of your buyer's journey — awareness, comparison, and decision. Include both broad category questions and more specific ones that a near-purchase prospect might ask.

This prompt library becomes the core input for everything else. The more thoughtfully you build it, the more useful your tracking data will be.
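Storing the library in a structured form, rather than a loose doc, makes it easier to reuse exactly the same prompts on every run and to spot buyer stages with too few prompts. Below is a minimal Python sketch. It assumes you tag each prompt with one of the three stages named above. `TrackedPrompt`, `build_library`, and `stage_coverage` are illustrative names and are not part of any tool.

```python
from collections import Counter
from dataclasses import dataclass

STAGES = ("awareness", "comparison", "decision")


@dataclass(frozen=True)
class TrackedPrompt:
    text: str
    stage: str  # one of STAGES


def build_library(rows):
    """Turn (text, stage) pairs into a de-duplicated prompt library."""
    seen = set()
    library = []
    for text, stage in rows:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage!r}")
        key = " ".join(text.lower().split())  # ignore case and extra spaces when de-duplicating
        if key not in seen:
            seen.add(key)
            library.append(TrackedPrompt(text.strip(), stage))
    return library


def stage_coverage(library):
    """Count prompts per buyer stage so thin stages stand out."""
    counts = Counter(p.stage for p in library)
    return {stage: counts[stage] for stage in STAGES}


library = build_library([
    ("What tools do SEO agencies use to track rankings?", "awareness"),
    ("Best AI search analytics software for SaaS companies", "comparison"),
    ("How do I know if my brand is showing up in ChatGPT?", "awareness"),
    ("how do I know if my brand is showing up in  ChatGPT?", "awareness"),  # near-duplicate
])
print(stage_coverage(library))  # {'awareness': 2, 'comparison': 1, 'decision': 0}
```

In this example the empty "decision" bucket is a signal to add near-purchase prompts before you start tracking.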

Step 2: Set Up Automated Tracking Across AI Engines

Running these prompts manually is possible, but it doesn't scale. You'd have to open each AI platform, type in each prompt, copy the response, and log it — and then do it again next week because AI answers change over time.

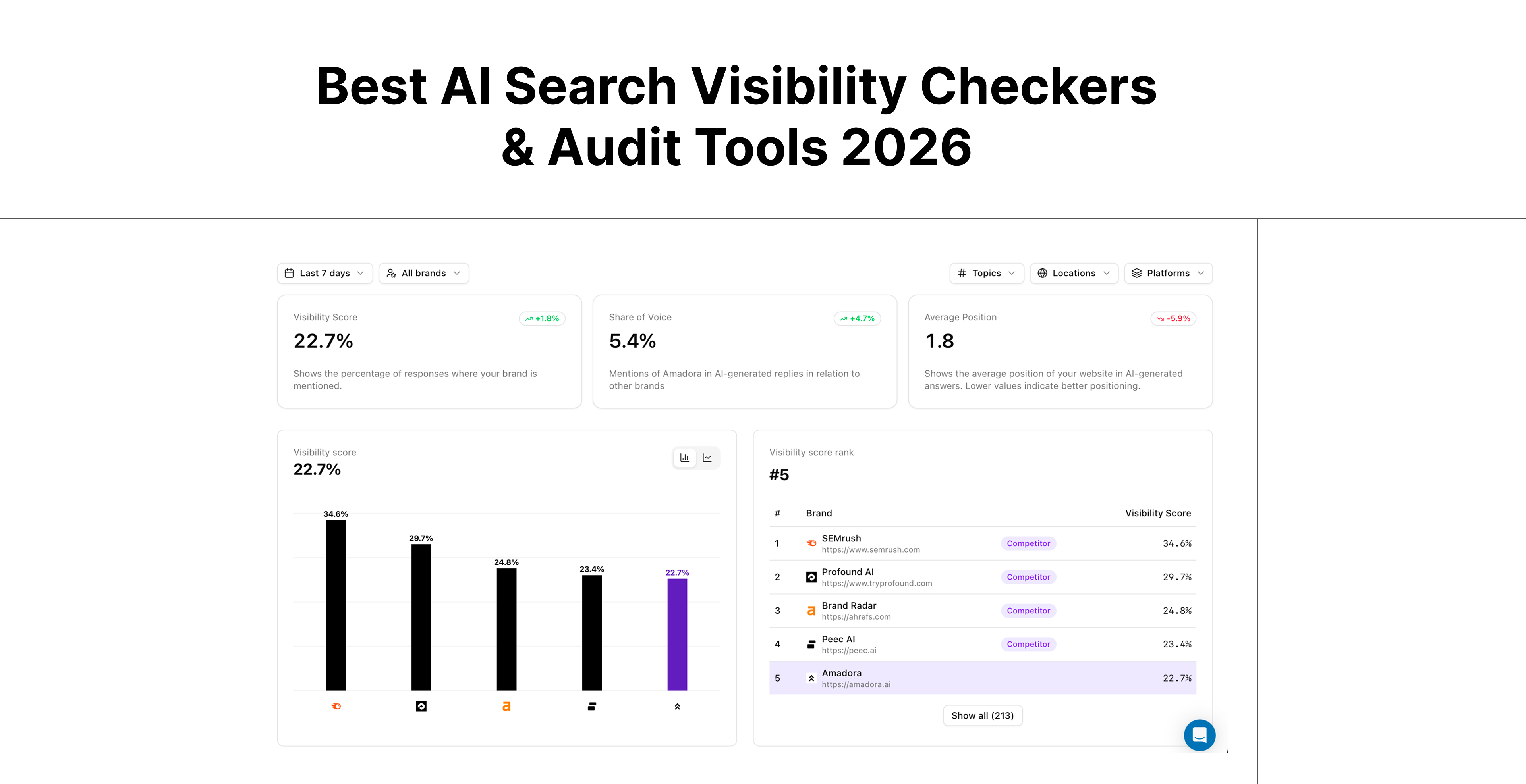

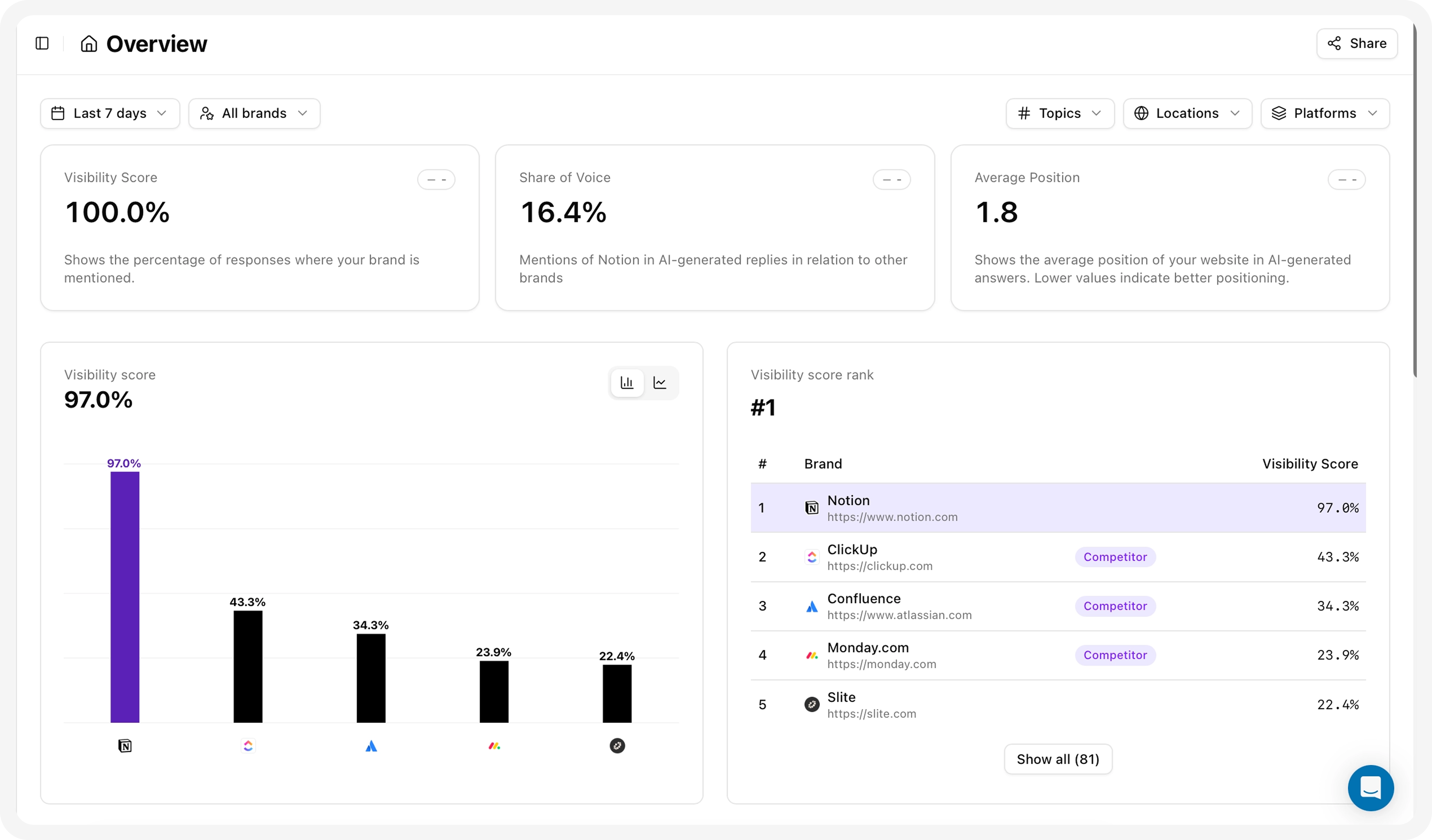

The smarter approach is to use a dedicated AI search visibility tool. Amadora AI is built specifically for this: it runs your prompt library across AI engines like ChatGPT, Google AI Overviews, Perplexity, Claude, Grok, and Copilot, then tracks where and how often your brand gets mentioned.

Here's what the setup looks like in practice:

- Connect your brand — enter your brand name and the competitors you want to monitor alongside it

- Import your prompt library — add the conversational queries you identified in Step 1

- Select your target engines — choose which AI platforms to track (most teams start with ChatGPT, Perplexity, and Google AI Overviews, then expand)

- Let it run — Amadora AI queries the engines at regular intervals and logs the responses

Once it's running, you stop doing manual checks entirely. The platform surfaces the data for you.
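Conceptually, any automated tracker comes down to a loop that sends every prompt to every engine and stores the raw answers with a timestamp. The Python sketch below shows only that loop. It is not how Amadora AI works internally. It assumes each engine is wrapped in a hypothetical callable that takes a prompt and returns the answer text, for example a thin wrapper around whichever official API client you use. Scheduling, such as a weekly cron job, is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ResponseLog:
    run_at: str    # ISO timestamp shared by every answer in one run
    engine: str
    prompt: str
    response: str


def run_prompt_library(
    prompts: list[str],
    engines: dict[str, Callable[[str], str]],
) -> list[ResponseLog]:
    """Send every prompt to every engine once and record the raw answers.

    Each value in `engines` is a hypothetical wrapper: it takes a prompt and
    returns that engine's answer as plain text.
    """
    run_at = datetime.now(timezone.utc).isoformat()
    logs = []
    for engine_name, ask in engines.items():
        for prompt in prompts:
            try:
                answer = ask(prompt)
            except Exception as exc:  # one failed call shouldn't sink the whole run
                answer = f"ERROR: {exc}"
            logs.append(ResponseLog(run_at, engine_name, prompt, answer))
    return logs


# Stand-in engines for illustration; real ones would call each platform's API.
fake_engines = {
    "engine_a": lambda prompt: "Acme CRM and Globex are popular choices.",
    "engine_b": lambda prompt: "Many small teams use Globex.",
}
logs = run_prompt_library(["What's the best CRM for small teams?"], fake_engines)
for entry in logs:
    print(entry.engine, "->", entry.response)
```

Keeping the raw text, rather than only a yes/no flag, means you can recompute metrics later if your definitions change.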

Step 3: Understand What You're Actually Measuring

Once your tracking is live, you'll have access to several types of data. Knowing what each one means helps you act on it.

- Visibility Score: How often you appear.

- Share of Voice: Your dominance compared to competitors.

- Average Position: Your ranking in recommendations.

- Citation Share: How frequently the AI links to your site.
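Tools define these metrics in their own ways, so treat the following as one plausible set of definitions and not as Amadora AI's formulas. Visibility score is the fraction of responses that mention you. Share of voice is your mentions divided by all tracked-brand mentions. Average position is your mean rank among tracked brands, ordered by first mention, in responses that include you. Citation share is the fraction of cited URLs that point to your domain. The Python sketch assumes each logged response is a dict with hypothetical `text` and `citations` keys, and it uses placeholder `.example` domains.

```python
import re
from urllib.parse import urlparse


def mention_order(text, brands):
    """Return the brands found in `text`, ordered by where each is first mentioned."""
    first_seen = {}
    for brand in brands:
        # Case-insensitive match that won't fire inside a longer word.
        match = re.search(rf"(?<!\w){re.escape(brand)}(?!\w)", text, re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    return sorted(first_seen, key=first_seen.get)


def compute_metrics(responses, brand, competitors, brand_domain):
    tracked = [brand, *competitors]
    appeared = 0        # responses that mention your brand
    all_mentions = 0    # tracked-brand mentions, counted once per brand per response
    positions = []      # your 1-based rank in each response that mentions you
    cited_total = 0
    cited_ours = 0
    for response in responses:
        order = mention_order(response["text"], tracked)
        all_mentions += len(order)
        if brand in order:
            appeared += 1
            positions.append(order.index(brand) + 1)
        for url in response.get("citations", []):
            cited_total += 1
            host = urlparse(url).hostname or ""
            if host == brand_domain or host.endswith("." + brand_domain):
                cited_ours += 1
    n = len(responses)
    return {
        "visibility_score": appeared / n if n else 0.0,
        "share_of_voice": appeared / all_mentions if all_mentions else 0.0,
        "average_position": sum(positions) / len(positions) if positions else None,
        "citation_share": cited_ours / cited_total if cited_total else 0.0,
    }


responses = [
    {"text": "Globex and Acme are both solid picks.",
     "citations": ["https://www.acme.example/pricing", "https://reviews.example/crm"]},
    {"text": "Most agencies use Globex.", "citations": ["https://globex.example"]},
]
metrics = compute_metrics(responses, "Acme", ["Globex"], "acme.example")
print(metrics)
```

Brand matching here is a plain case-insensitive name search, so aliases, misspellings, and product names would need extra handling.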

Source attribution — which URLs and domains the AI engine is citing when it mentions (or ignores) your brand. This is one of the most valuable signals. AI engines don't pull answers from thin air — they lean heavily on a set of trusted sources. If none of those sources mention your brand, that explains a lot.
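To see which sources the engines lean on when they leave you out, you can tally the domains cited in responses that don't mention your brand and compare them with the domains cited in responses that do. The following is a minimal sketch that assumes the same hypothetical `text`/`citations` response shape. `brand_mentioned` is any function you supply that decides whether a response mentions you, and the `.example` domains are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(responses, brand_mentioned):
    """Tally cited domains separately for responses that do and don't mention you.

    Each domain is counted at most once per response.
    """
    with_brand, without_brand = Counter(), Counter()
    for response in responses:
        domains = {
            (urlparse(url).hostname or "").removeprefix("www.")
            for url in response.get("citations", [])
        }
        domains.discard("")  # skip URLs that had no parsable host
        bucket = with_brand if brand_mentioned(response["text"]) else without_brand
        bucket.update(domains)
    return with_brand, without_brand


responses = [
    {"text": "Globex leads here.",
     "citations": ["https://www.review-site.example/x", "https://globex.example"]},
    {"text": "Acme and Globex are close.",
     "citations": ["https://www.review-site.example/y"]},
    {"text": "Try Globex.",
     "citations": ["https://review-site.example/z", "https://www.listicle.example/a"]},
]
with_brand, without_brand = cited_domains(responses, lambda text: "acme" in text.lower())
print(without_brand.most_common(3))
```

Domains that dominate the `without_brand` tally are sources the engines trust for this topic that, so far, don't seem to be working in your favor.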

Response context — is the AI mentioning you positively, neutrally, or in a way that's inaccurate? Getting mentioned is a starting point, but how you're mentioned affects conversion.

Amadora AI reverse-engineers AI responses to surface this source data and give you a clear picture of what the engines are actually working from when they talk about your category.

Step 4: Audit the Gaps

With data in hand, the next step is diagnosis. Where are you showing up — and where aren't you?

Look for:

- High-intent prompts where you're invisible. If a query like "best [your category] tool for agencies" returns your three main competitors but not you, that's a priority gap.

- Prompts where a competitor consistently outranks you. This usually points to something specific: they likely have better coverage on the sources the AI trusts for this topic.

- Prompts where you appear but the context is weak or inaccurate. Being mentioned isn't always good. If an AI describes your product incorrectly or positions you as a budget option when you're not, that needs addressing too.

The goal of this audit is to walk away with a short list of specific, high-priority gaps — not a general sense that "we need more visibility."
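Once responses are summarized per prompt, the first category of gaps can be pulled out mechanically: prompts where competitors show up and you don't. The sketch below assumes a hypothetical per-prompt summary that records an intent label you assign ("high" or "low") and the set of tracked brands mentioned. It returns high-intent gaps first, then the prompts where the most competitors appear. The other two categories, being outranked and weak or inaccurate context, still need a person to read the responses.

```python
def find_gaps(results, brand, min_competitors=1):
    """List prompts where `brand` is missing but competitors appear.

    `results` maps prompt -> {"intent": "high" | "low", "mentioned": set of brands}.
    """
    gaps = []
    for prompt, summary in results.items():
        competitors_present = summary["mentioned"] - {brand}
        if brand not in summary["mentioned"] and len(competitors_present) >= min_competitors:
            gaps.append((summary["intent"] == "high", len(competitors_present), prompt))
    # High intent first, then most competitors present, then alphabetical for stability.
    gaps.sort(key=lambda g: (not g[0], -g[1], g[2]))
    return [prompt for _, _, prompt in gaps]


results = {
    "best crm tool for agencies": {"intent": "high", "mentioned": {"Globex", "Initech", "Hooli"}},
    "what is a crm": {"intent": "low", "mentioned": {"Globex"}},
    "crm for small teams": {"intent": "high", "mentioned": {"Acme", "Globex"}},
    "crm pricing comparison": {"intent": "high", "mentioned": {"Globex"}},
}
print(find_gaps(results, "Acme"))
```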

Step 5: Take Action on the Data

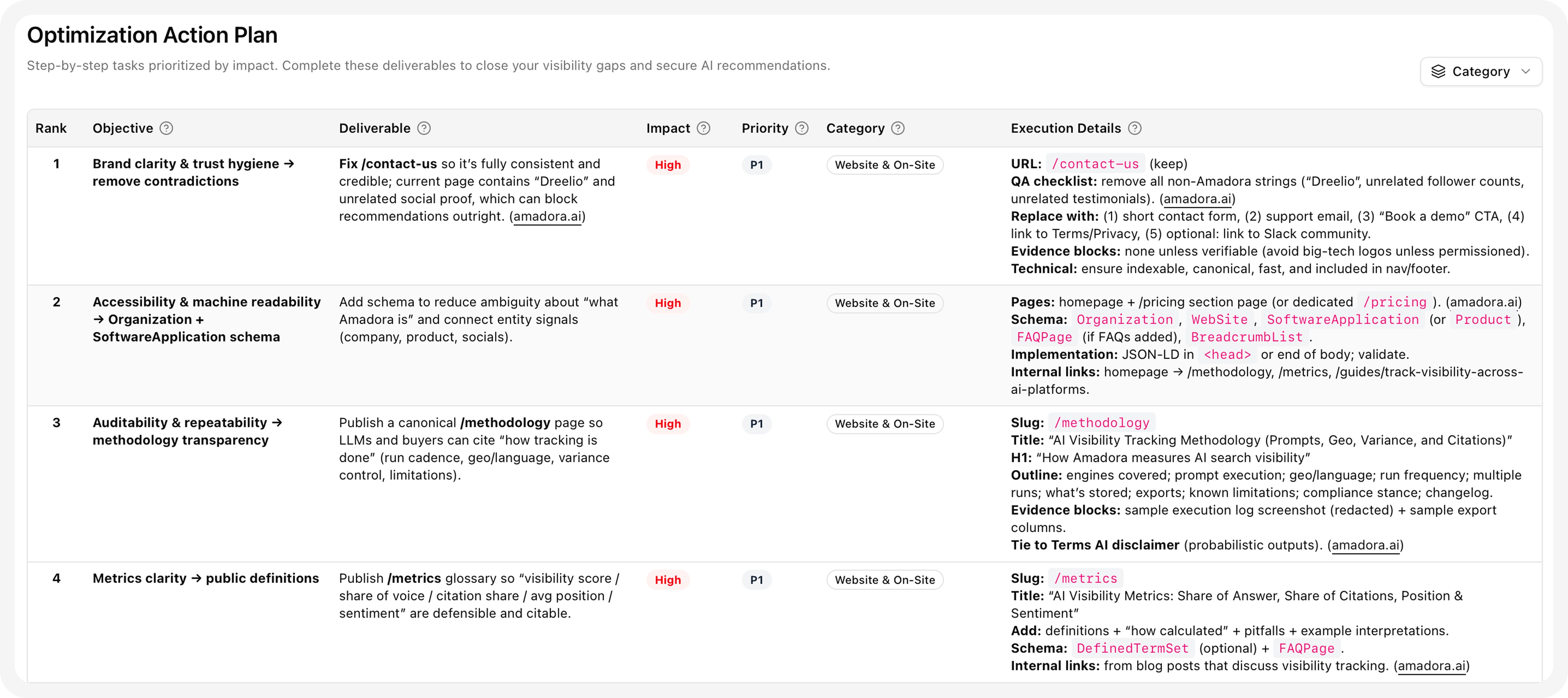

At Amadora AI, we built an AI search optimization engine. It reverse-engineers AI replies to reveal the criteria LLMs use to evaluate entities, detects optimization gaps, and provides a detailed, actionable plan.

Once you've finished the audit, you can create optimization plans for specific prompts. Each plan gives you the following:

- AI Evaluation Criteria: the exact criteria AI models use to evaluate brands in your niche for this specific prompt.

- Brand Gap Analysis: a direct comparison of your brand against those criteria.

- Optimization Action Plan: a step-by-step list of deliverables, prioritized by impact. Follow the execution details shown on the right. Completing these tasks is designed to close your visibility gaps and make AI engines more likely to recommend you.

- Trusted Citations: the exact websites and domains the AI relies on to formulate answers for this specific prompt.

How Often Should You Check?

AI engines update their training and retrieval behaviors over time, and your visibility will shift. A quarterly check is the bare minimum. For most active brands, monthly is more appropriate — especially if you're actively publishing content or trying to close specific visibility gaps.

The advantage of automated tracking is that you don't have to remember to check. The data accumulates in the background, and you can spot trends over time: are your mention rates improving? Is a competitor surging on a set of prompts? Did that piece of content you published last month start showing up in AI responses?

That longitudinal view — seeing how your AI visibility changes over time — is something you simply can't get from manual checks.
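Spotting those trends comes down to comparing the same metric between runs. The following is a minimal sketch that assumes you have a per-prompt mention rate (the share of responses that mentioned you) for two periods. The 0.2 threshold is an arbitrary example value. Prompts that appear in only one run are skipped, so you only compare like with like.

```python
def mention_rate_changes(previous, current, threshold=0.2):
    """Flag prompts whose mention rate moved by at least `threshold` between runs.

    `previous` and `current` map prompt -> mention rate between 0 and 1.
    Returns {prompt: change}, biggest drops first.
    """
    changes = {}
    for prompt in previous.keys() & current.keys():  # only prompts tracked in both runs
        delta = current[prompt] - previous[prompt]
        if abs(delta) >= threshold:
            changes[prompt] = round(delta, 3)
    return dict(sorted(changes.items(), key=lambda kv: kv[1]))


last_month = {"best crm for agencies": 0.5, "crm for small teams": 0.2, "crm pricing": 0.9}
this_month = {"best crm for agencies": 0.5, "crm for small teams": 0.6, "crm pricing": 0.4,
              "new prompt": 1.0}
print(mention_rate_changes(last_month, this_month))
```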

A Note on Doing This Manually

If you want to start without a tool, you can. Open ChatGPT, Perplexity, and Claude. Run a handful of your most important prompts. Copy the responses into a spreadsheet and note whether your brand was mentioned, what context it appeared in, and which competitors also showed up.
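If you take the spreadsheet route, fixing the columns up front makes next month's run directly comparable with this one. Below is a minimal Python sketch using the standard `csv` module. The column names are only a suggestion. The demo writes to an in-memory buffer; in practice you would open a file in append mode, e.g. `open("ai_mentions.csv", "a", newline="")`.

```python
import csv
import io

# Suggested columns for a manual tracking sheet; keep them identical every run.
FIELDS = ["date", "engine", "prompt", "brand_mentioned", "context", "competitors"]


def append_rows(stream, rows, write_header=False):
    """Write one CSV row per (engine, prompt) check."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    for row in rows:
        # Store the competitor set as a stable, sorted string so runs diff cleanly.
        writer.writerow({**row, "competitors": "; ".join(sorted(row["competitors"]))})


buffer = io.StringIO()  # swap for open("ai_mentions.csv", "a", newline="") in practice
append_rows(buffer, [{
    "date": "2024-05-01",
    "engine": "ChatGPT",
    "prompt": "What's the best CRM for small teams?",
    "brand_mentioned": True,
    "context": "listed second, described accurately",
    "competitors": {"Globex", "Initech"},
}], write_header=True)
print(buffer.getvalue())
```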

It'll take a couple of hours. You'll get a useful snapshot.

But next month, when you want to compare? You'll need to do it all again, with the same prompts, across the same platforms, in the same way — otherwise the comparison isn't meaningful. And as your prompt library grows, manual tracking becomes genuinely unsustainable.

The brands that will win in AI search aren't the ones who do the best manual audit once. They're the ones who track consistently, spot changes early, and respond faster than their competitors.

The Bottom Line

AI search visibility is one of the most important (and most overlooked) channels in B2B and SaaS marketing right now. Many brands are losing traffic to AI engines with no visibility into why, or into what to do about it.

Tracking your mentions in ChatGPT, Claude, and Perplexity isn't complicated — but it does require a different approach than traditional SEO. Build a solid prompt library, set up automated tracking, understand what the data is telling you, and make the specific changes that will actually move your mention rate.

If you want to get started quickly, Amadora AI is designed exactly for this. It tracks your brand across all the major AI engines, shows you which sources are driving AI responses in your category, and gives you a clear action plan — not just a dashboard.